Bug Report: Proxmox VE Special Agent fails completely when a single cluster node is down

Product: Checkmk 2.4.0p15 (CRE)

Component: agent_proxmox_ve (Proxmox VE Special Agent)

Environment: Proxmox VE 3-node cluster (one node down)

Severity: High – complete monitoring failure of otherwise healthy cluster

1. Summary

When one Proxmox VE node in a cluster is down, the Checkmk Proxmox VE Special Agent completely aborts, even though the remaining nodes are fully online and functional.

This results in:

-

No data from any node

-

All Proxmox-related services in Checkmk showing:

(Service Check Timed Out) -

Loss of monitoring for the entire cluster, even though only one element is faulty

This behaviour is incorrect and constitutes a functional bug.

2. How to reproduce

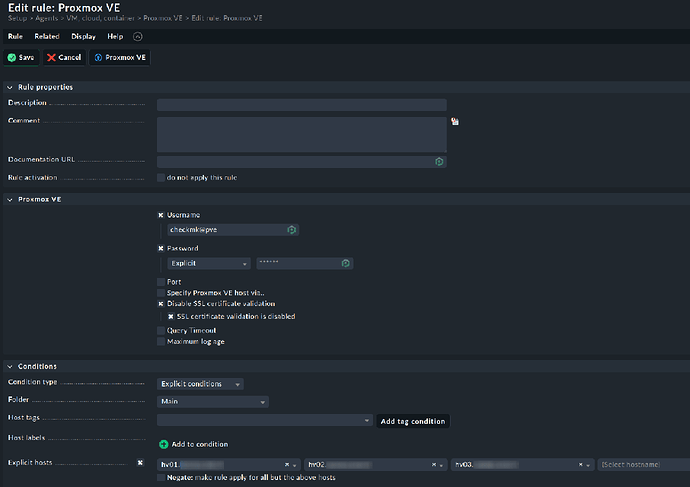

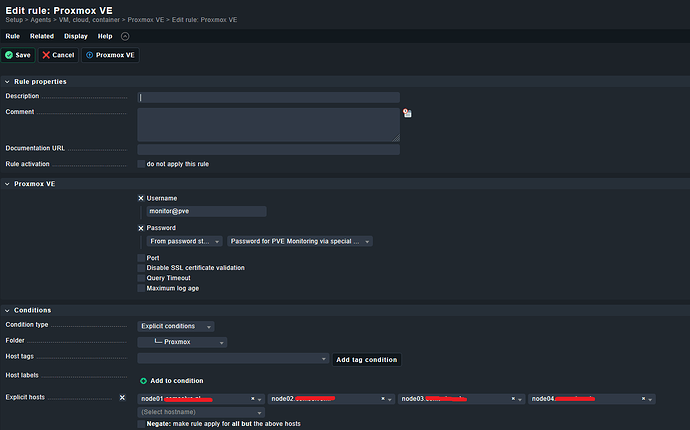

Cluster setup

-

PVE cluster with 3 nodes:

-

hv01– online -

hv02– online -

hv03– offline (hardware failure)

-

Steps

-

Power off one PVE node completely (no network, no API, no corosync).

-

Keep the Checkmk Proxmox datasource rule unchanged (default: query all nodes).

-

Run the Special Agent manually:

share/check_mk/agents/special/agent_proxmox_ve \ -u <user> -p <pass> --no-cert-check \ --timeout 10 \ <any-cluster-node-ip>

Actual output

Read timeout after 10s when trying to GET nodes/hv03/lxc

Checkmk GUI

All cluster-related hosts show:

Service Check Timed Out

3. Expected behavior

-

The Special Agent must continue even if one node does not respond.

-

All reachable nodes should still be queried.

-

Only the data for the failed node should be missing, degraded, or WARN/UNKNOWN.

-

Monitoring of a healthy majority of nodes should not fail because one node is down.

4. Actual behavior

-

The Special Agent tries to recursively fetch all API paths, including:

/nodes/<node>/lxc /nodes/<node>/qemu /nodes/<node>/version ... -

When a single node does not respond,

requestsraises aReadTimeout. -

In

get_api_element(), this error is not caught:raise CannotRecover(f"Read timeout after {self._timeout}s when trying to GET {path}") -

The entire agent quits → no data output at all → Checkmk interprets as a timeout.

This is a design flaw for any clustered or HA environment.

5. Technical root cause (based on code analysis)

Key line in get_api_element()

except requests.exceptions.ReadTimeout:

raise CannotRecover(f"Read timeout after {self._timeout}s when trying to GET {path}")

This escalates a single-node timeout into total agent failure.

Recursive tree building

get_tree() walks all nodes and VM paths without error isolation per node.

One failed request aborts the entire recursion.

6. Impact

-

Monitoring of multi-node PVE clusters becomes unstable.

-

A single down node → all nodes unmonitored

-

No cluster data, no VM data, no node metrics

-

Not acceptable for production or HA environments

7. Proposed fix (minimal, non-breaking)

Add per-node error handling in rec_get_tree():

- response = self._session.get_api_element("/".join(map(str, next_path)))

+ try:

+ response = self._session.get_api_element("/".join(map(str, next_path)))

+ except CannotRecover as e:

+ LOGGER.warning("Skipping subtree %s due to error: %s", next_path, e)

+ return {} # or an empty structure

Rationale:

A single dead node must not cause complete data loss for an entire cluster.

8. Verification data (for developers)

-

Special Agent output:

Read timeout after 10s when trying to GET nodes/hv03/lxc -

journalctl -u pveproxyon Proxmox:proxy detected vanished client connection -

pvecm statusconfirms nodehv03is offline:Nodes: 2 (expected 3) -

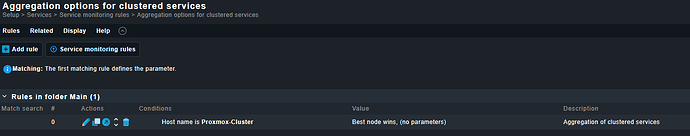

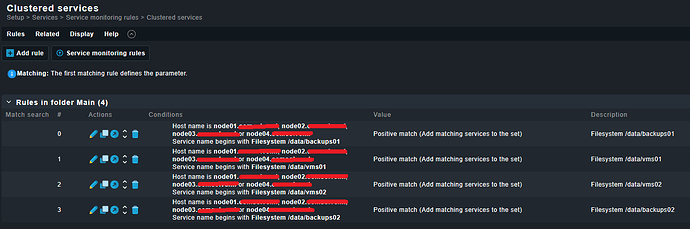

Screenshot (Checkmk GUI):

All PVE hosts show

(Service Check Timed Out).

9. Request

Please classify this as a bug and implement per-node exception handling so that the Special Agent:

-

continues collecting data from reachable nodes,

-

gracefully handles down nodes,

-

and outputs partial results instead of nothing.

This behavior is crucial for stable monitoring of clustered Proxmox environments.

If desired, I can also provide packet captures, debug logs, or help test a patched version.

Communication preferably in German if possible.