Hi Leon,

Thanks for your time.

There is a sudden jump of about 30 seconds (15:02:28 to 15:02:56) halfway through the replication disks listing, between entries 11 (res_15) and 12 (res_18):

Sep 16 15:02:28 11: ID = res_15

Sep 16 15:02:28

Sep 16 15:02:28 LUN = sv_14

Sep 16 15:02:28

Sep 16 15:02:28 Name = ADC_Datastore_4_replicated

Sep 16 15:02:28

Sep 16 15:02:28 Description =

Sep 16 15:02:28

Sep 16 15:02:28 Type = Primary

Sep 16 15:02:28

Sep 16 15:02:28 Base storage resource = res_15

Sep 16 15:02:28

Sep 16 15:02:28 Source =

Sep 16 15:02:28

Sep 16 15:02:28 Original parent =

Sep 16 15:02:28

Sep 16 15:02:28 Health state = OK (5)

Sep 16 15:02:28

Sep 16 15:02:28 Health details = "The component is operating normally. No action is required."

Sep 16 15:02:28

Sep 16 15:02:28 Storage pool ID = pool_1

Sep 16 15:02:28

Sep 16 15:02:28 Storage pool = Pool1

Sep 16 15:02:28

Sep 16 15:02:28 Size = 2199023255552 (2.0T)

Sep 16 15:02:28

Sep 16 15:02:28 Maximum size = 70368744177664 (64.0T)

Sep 16 15:02:28

Sep 16 15:02:28 Thin provisioning enabled = yes

Sep 16 15:02:28

Sep 16 15:02:28 Data Reduction enabled = yes

Sep 16 15:02:28

Sep 16 15:02:28 Data Reduction space saved = 639144820736 (595.2G)

Sep 16 15:02:28

Sep 16 15:02:28 Data Reduction percent = 50%

Sep 16 15:02:28

Sep 16 15:02:28 Data Reduction ratio = 1.99:1

Sep 16 15:02:28

Sep 16 15:02:28 Advanced deduplication enabled = yes

Sep 16 15:02:28

Sep 16 15:02:28 Current allocation = 597795602432 (556.7G)

Sep 16 15:02:28

Sep 16 15:02:28 Preallocated = 137080946688 (127.6G)

Sep 16 15:02:28

Sep 16 15:02:28 Total Pool Space Used = 646213713920 (601.8G)

Sep 16 15:02:28

Sep 16 15:02:28 Protection size used = 26674839552 (24.8G)

Sep 16 15:02:28

Sep 16 15:02:28 Non-base size used = 26674839552 (24.8G)

Sep 16 15:02:28

Sep 16 15:02:28 Family size used = 646213713920 (601.8G)

Sep 16 15:02:28

Sep 16 15:02:28 Snapshot count = 2

Sep 16 15:02:28

Sep 16 15:02:28 Family snapshot count = 2

Sep 16 15:02:28

Sep 16 15:02:28 Family thin clone count = 0

Sep 16 15:02:28

Sep 16 15:02:28 Protection schedule =

Sep 16 15:02:28

Sep 16 15:02:28 Protection schedule paused =

Sep 16 15:02:28

Sep 16 15:02:28 SP owner = SPB

Sep 16 15:02:28

Sep 16 15:02:28 Trespassed = no

Sep 16 15:02:28

Sep 16 15:02:28 Version = 6

Sep 16 15:02:28

Sep 16 15:02:28 Block size =

Sep 16 15:02:28

Sep 16 15:02:28 Virtual disk access hosts = Host_1, Host_2, Host_3, Host_4

Sep 16 15:02:28

Sep 16 15:02:28 Host LUN IDs = 9, 9, 9, 5

Sep 16 15:02:28

Sep 16 15:02:28 Snapshots access hosts =

Sep 16 15:02:28

Sep 16 15:02:28 WWN = 60:06:01:60:A2:D0:56:00:9E:21:58:62:84:F0:69:94

Sep 16 15:02:28

Sep 16 15:02:28 Replication destination = no

Sep 16 15:02:28

Sep 16 15:02:28 Creation time = 2022-04-14 13:28:59

Sep 16 15:02:28

Sep 16 15:02:28 Last modified time = 2022-04-14 13:28:59

Sep 16 15:02:28

Sep 16 15:02:28 IO limit =

Sep 16 15:02:28

Sep 16 15:02:28 Effective maximum IOPS = N/A

Sep 16 15:02:28

Sep 16 15:02:28 Effective maximum KBPS = N/A

Sep 16 15:02:56

Sep 16 15:02:56

Sep 16 15:02:56

Sep 16 15:02:56 12: ID = res_18

Sep 16 15:02:56

Sep 16 15:02:56 LUN = sv_18

Sep 16 15:02:56

Sep 16 15:02:56 Name = ADC_Datastore_5_Replicated

Sep 16 15:02:56

Sep 16 15:02:56 Description =

Sep 16 15:02:56

Sep 16 15:02:56 Type = Primary

Sep 16 15:02:56

Sep 16 15:02:56 Base storage resource = res_18

Sep 16 15:02:56

Sep 16 15:02:56 Source =

Sep 16 15:02:56

Sep 16 15:02:56 Original parent =

Sep 16 15:02:56

Sep 16 15:02:56 Health state = OK (5)

Sep 16 15:02:56

Sep 16 15:02:56 Health details = "The component is operating normally. No action is required."

Sep 16 15:02:56

Sep 16 15:02:56 Storage pool ID = pool_1

Sep 16 15:02:56

Sep 16 15:02:56 Storage pool = Pool1

Sep 16 15:02:56

Sep 16 15:02:56 Size = 3221225472000 (2.9T)

Sep 16 15:02:56

Sep 16 15:02:56 Maximum size = 70368744177664 (64.0T)

Sep 16 15:02:56

Sep 16 15:02:56 Thin provisioning enabled = yes

Sep 16 15:02:56

Sep 16 15:02:56 Data Reduction enabled = yes

Sep 16 15:02:56

Sep 16 15:02:56 Data Reduction space saved = 1063541276672 (990.5G)

Sep 16 15:02:56

Sep 16 15:02:56 Data Reduction percent = 57%

Sep 16 15:02:56

Sep 16 15:02:56 Data Reduction ratio = 2.34:1

Sep 16 15:02:56

Sep 16 15:02:56 Advanced deduplication enabled = yes

Sep 16 15:02:56

Sep 16 15:02:56 Current allocation = 685758431232 (638.6G)

Sep 16 15:02:56

Sep 16 15:02:56 Preallocated = 197243912192 (183.6G)

Sep 16 15:02:56

Sep 16 15:02:56 Total Pool Space Used = 794625097728 (740.0G)

Sep 16 15:02:56

Sep 16 15:02:56 Protection size used = 83633733632 (77.8G)

Sep 16 15:02:56

Sep 16 15:02:56 Non-base size used = 83633733632 (77.8G)

Sep 16 15:02:56

Sep 16 15:02:56 Family size used = 794625097728 (740.0G)

Sep 16 15:02:56

Sep 16 15:02:56 Snapshot count = 5

Sep 16 15:02:56

Sep 16 15:02:56 Family snapshot count = 5

Sep 16 15:02:56

Sep 16 15:02:56 Family thin clone count = 0

Sep 16 15:02:56

Sep 16 15:02:56 Protection schedule = snapSch_4

Sep 16 15:02:56

Sep 16 15:02:56 Protection schedule paused = no

Sep 16 15:02:56

Sep 16 15:02:56 SP owner = SPA

Sep 16 15:02:56

Sep 16 15:02:56 Trespassed = no

Sep 16 15:02:56

Sep 16 15:02:56 Version = 6

Sep 16 15:02:56

Sep 16 15:02:56 Block size =

Sep 16 15:02:56

Sep 16 15:02:56 Virtual disk access hosts = Host_1, Host_2, Host_3, Host_4

Sep 16 15:02:56

Sep 16 15:02:56 Host LUN IDs = 10, 10, 10, 7

Sep 16 15:02:56

Sep 16 15:02:56 Snapshots access hosts =

Sep 16 15:02:56

Sep 16 15:02:56 WWN = 60:06:01:60:A2:D0:56:00:77:89:77:63:4D:56:44:00

Sep 16 15:02:56

Sep 16 15:02:56 Replication destination = no

Sep 16 15:02:56

Sep 16 15:02:56 Creation time = 2022-11-18 13:32:35

Sep 16 15:02:56

Sep 16 15:02:56 Last modified time = 2025-09-01 11:18:21

Sep 16 15:02:56

Sep 16 15:02:56 IO limit =

Sep 16 15:02:56

Sep 16 15:02:56 Effective maximum IOPS = N/A

Sep 16 15:02:56

Sep 16 15:02:56 Effective maximum KBPS = N/A

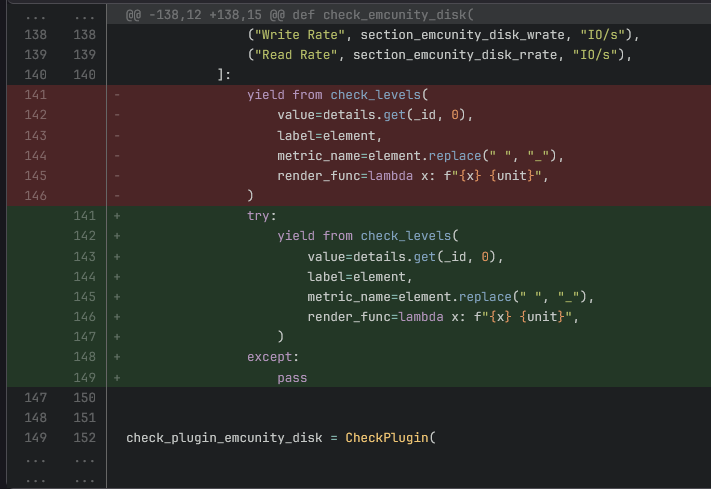

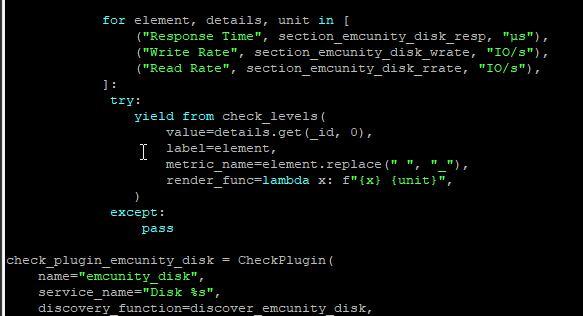

If I disable that piece by commenting it out as you suggested, the 30-second jump occurs somewhere else, this time inside the Host_2 entry of the emcunity_hostcons section (15:10:29 to 15:11:01):

Sep 16 15:10:29 <<<emcunity_hostcons:sep(61)>>>

Sep 16 15:10:29 1: ID = Host_1

Sep 16 15:10:29

Sep 16 15:10:29 Name = esxi4

Sep 16 15:10:29

Sep 16 15:10:29 Description =

Sep 16 15:10:29

Sep 16 15:10:29 Tenant =

Sep 16 15:10:29

Sep 16 15:10:29 Type = host

Sep 16 15:10:29

Sep 16 15:10:29 Address = 10.127.0.185,10.254.127.74,10.254.128.74,10.255.0.1

Sep 16 15:10:29

Sep 16 15:10:29 Netmask =

Sep 16 15:10:29

Sep 16 15:10:29 OS type = VMware ESXi 8.0.3

Sep 16 15:10:29

Sep 16 15:10:29 Ignored address =

Sep 16 15:10:29

Sep 16 15:10:29 Management type = VMware

Sep 16 15:10:29

Sep 16 15:10:29 Accessible LUNs = sv_1,sv_2,sv_4,sv_5,sv_9,sv_7,sv_8,sv_12,sv_13,sv_14,sv_18,sv_19

Sep 16 15:10:29

Sep 16 15:10:29 Host LUN IDs = 0,1,2,3,4,5,6,7,8,9,10,11

Sep 16 15:10:29

Sep 16 15:10:29 Host group =

Sep 16 15:10:29

Sep 16 15:10:29 Health state = OK (5)

Sep 16 15:10:29

Sep 16 15:10:29 Health details = "The component is operating normally. No action is required."

Sep 16 15:10:29

Sep 16 15:10:29

Sep 16 15:10:29

Sep 16 15:10:29 2: ID = Host_2

Sep 16 15:10:29

Sep 16 15:10:29 Name = esxi5

Sep 16 15:11:01

Sep 16 15:11:01 Description =

Sep 16 15:11:01

Sep 16 15:11:01 Tenant =

Sep 16 15:11:01

Sep 16 15:11:01 Type = host

Sep 16 15:11:01

Sep 16 15:11:01 Address = 10.127.0.186,10.254.127.75,10.254.128.75,10.255.0.3

Sep 16 15:11:01

Sep 16 15:11:01 Netmask =

Sep 16 15:11:01

Sep 16 15:11:01 OS type = VMware ESXi 8.0.3

Sep 16 15:11:01

Sep 16 15:11:01 Ignored address =

Sep 16 15:11:01

Sep 16 15:11:01 Management type = VMware

Sep 16 15:11:01

Sep 16 15:11:01 Accessible LUNs = sv_1,sv_2,sv_4,sv_5,sv_9,sv_7,sv_8,sv_12,sv_13,sv_14,sv_18,sv_19

Sep 16 15:11:01

Sep 16 15:11:01 Host LUN IDs = 0,1,2,3,4,5,6,7,8,9,10,11

Sep 16 15:11:01

Sep 16 15:11:01 Host group =

Sep 16 15:11:01

Sep 16 15:11:01 Health state = OK (5)

Sep 16 15:11:01

Sep 16 15:11:01 Health details = "The component is operating normally. No action is required."
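In case it helps to locate these jumps without reading through the whole output, here is a minimal Python sketch that scans timestamped lines like the ones above and reports any gap larger than a threshold. The function name `find_gaps`, the 5-second default threshold, and the year 2025 are my own assumptions (the log lines carry no year), and month parsing with `%b` assumes an English locale.

```python
from datetime import datetime


def find_gaps(lines, threshold_s=5, year=2025):
    """Return (seconds, previous_line, current_line) for each timestamp jump.

    Hypothetical helper: expects lines starting with "Mon DD HH:MM:SS".
    The year is an assumption because the log lines do not include one.
    """
    gaps = []
    prev_ts = None
    prev_line = None
    for line in lines:
        stamp = line[:15]  # e.g. "Sep 16 15:02:28"
        try:
            ts = datetime.strptime(f"{year} {stamp}", "%Y %b %d %H:%M:%S")
        except ValueError:
            continue  # skip empty lines and lines without a timestamp
        if prev_ts is not None:
            delta = (ts - prev_ts).total_seconds()
            if delta > threshold_s:
                gaps.append((delta, prev_line, line.rstrip()))
        prev_ts, prev_line = ts, line.rstrip()
    return gaps


if __name__ == "__main__":
    # Four lines taken from the first listing above.
    sample = [
        "Sep 16 15:02:28 Effective maximum KBPS = N/A",
        "",
        "Sep 16 15:02:56",
        "Sep 16 15:02:56 12: ID = res_18",
    ]
    for seconds, before, after in find_gaps(sample):
        print(f"{seconds:.0f}s gap after: {before}")
```

Run against the full captured output (for example `find_gaps(open("agent_output.log"))`, where the file name is only a placeholder), this should show whether the delay always falls at the same point in time relative to the start of the run or moves with the content.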