Here is my pool:

    # zpool status
      pool: Vol-10TB-6x2-RAIDZ1-1
     state: DEGRADED
    status: One or more devices are faulted in response to persistent errors.
            Sufficient replicas exist for the pool to continue functioning in a
            degraded state.
    action: Replace the faulted device, or use 'zpool clear' to mark the device
            repaired.
      scan: scrub repaired 0B in 1 days 12:52:35 with 0 errors on Mon Feb 17 12:52:37 2025
    config:

            NAME                                            STATE     READ WRITE CKSUM
            Vol-10TB-6x2-RAIDZ1-1                           DEGRADED     0     0     0
              raidz1-0                                      DEGRADED     0     0     0
                gptid/9d640fd2-8483-11ef-a549-1402ec8c19c5  ONLINE       0     0     0
                gptid/5d82c98a-e1d8-11e8-baf0-6cb3113b92f4  FAULTED      7    84     0  too many errors
                gptid/5e0e19e0-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/5e981951-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/5f263ddb-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/5fb1d0d2-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
              raidz1-1                                      ONLINE       0     0     0
                gptid/606e2af3-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/25d99864-35be-11e9-93f8-6cb3113b92f4  ONLINE       0     0     0
                gptid/622c7b9d-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/6365795a-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/64b345aa-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0
                gptid/65dbb6ba-e1d8-11e8-baf0-6cb3113b92f4  ONLINE       0     0     0

    errors: No known data errors

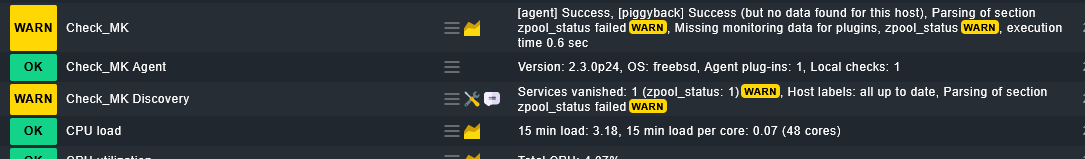

Here is the Checkmk (Raw 2.3.0p26) debug output:

    $ cmk --debug -nv --checks=zpool_status SERVER
    WARNING: '--checks' is deprecated in favour of option 'detect-plugins'
    + FETCHING DATA
    Traceback (most recent call last):
      File "/omd/sites/companysite/bin/cmk", line 124, in <module>
        exit_status = modes.call("--check", None, opts, args)
                      ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/modes/__init__.py", line 70, in call
        return handler(*handler_args)
               ^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/modes/check_mk.py", line 2163, in mode_check
        with (
      File "/omd/sites/companysite/lib/python3/cmk/base/errorhandling/_handler.py", line 66, in __exit__
        *_handle_failure(
         ^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/errorhandling/_handler.py", line 103, in _handle_failure
        raise exc
      File "/omd/sites/companysite/lib/python3/cmk/base/modes/check_mk.py", line 2179, in mode_check
        checks_result = execute_checkmk_checks(
                        ^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/checking/_checking.py", line 87, in execute_checkmk_checks
        service_results = list(
                          ^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/checking/_checking.py", line 224, in check_host_services
        yield plugin.function(host_name, service, providers=providers)
              ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/checkers.py", line 443, in check_function
        return get_aggregated_result(
               ^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/checkers.py", line 708, in get_aggregated_result
        section_kws, error_result = _get_monitoring_data_kwargs(
                                    ^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/checkers.py", line 629, in _get_monitoring_data_kwargs
        get_section_kwargs(
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/sectionparserutils.py", line 42, in get_section_kwargs
        if (resolved := resolver.resolve(name)) is not None
                        ^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/sectionparser.py", line 185, in resolve
        parsing_result := self._parser.parse(producer_name, producer.parse_function)
                          ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/sectionparser.py", line 94, in parse
        if (parsed := self._parse_raw_data(section_name, parse_function)) is None
                      ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/checkengine/sectionparser.py", line 117, in _parse_raw_data
        return parse_function(list(raw_data))
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/omd/sites/companysite/lib/python3/cmk/base/plugins/agent_based/zpool_status.py", line 91, in parse_zpool_status
        if line[1] != "ONLINE":
           ~~~~^^^
    IndexError: list index out of range
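
The last frame shows `parse_zpool_status` reading `line[1]` from a row that evidently has fewer than two fields. Which row of the agent output triggers this is not visible from the traceback alone. As a rough sketch of the kind of guard that would avoid the crash (this is not the plugin's actual code; the function name `collect_non_online_devices` and the single-token sample row are assumptions made up for illustration):

```python
from typing import Dict, List, Tuple


def collect_non_online_devices(rows: List[List[str]]) -> Dict[str, Tuple[str, ...]]:
    """Return every device whose state is not ONLINE.

    Each row is one whitespace-split line of the device table.
    Rows with fewer than two fields carry no state and are skipped
    instead of being indexed blindly.
    """
    problems: Dict[str, Tuple[str, ...]] = {}
    for row in rows:
        if len(row) < 2:
            # Hypothetical guard: a one-field row would raise IndexError on row[1].
            continue
        name, state = row[0], row[1]
        if state != "ONLINE":
            problems[name] = tuple(row[1:])
    return problems


# Sample rows taken from the pool above, plus one made-up single-token row.
rows = [
    ["gptid/9d640fd2-8483-11ef-a549-1402ec8c19c5", "ONLINE", "0", "0", "0"],
    ["gptid/5d82c98a-e1d8-11e8-baf0-6cb3113b92f4", "FAULTED", "7", "84", "0",
     "too", "many", "errors"],
    ["stray-token"],  # hypothetical row that would trigger the IndexError
]

print(collect_non_online_devices(rows))
```

With the guard in place only the FAULTED device is reported and the short row is ignored. Capturing the raw `<<<zpool_status>>>` section from the agent output would show which line is actually short.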
